Why Your Instagram Account Is Getting Suspended in 2026

From innocent family photos to thriving businesses — thousands are waking up to a disabled account. Here’s the real story behind the mass ban wave, and what you can do right now.

Imagine building a 50,000-follower Instagram account over eight years — a business, a brand, a community — and waking up one morning to find it gone. No warning. No explanation. Just a one-line message: “Your account has been disabled for violating our Community Guidelines.” This is the reality thousands of creators, small business owners, and everyday users are facing right now.

The Meta Ban Wave: What Actually Happened

To understand why accounts are disappearing in 2026, you need to understand what happened in 2025. It wasn’t a glitch. It wasn’t a coincidence. It was, in the words of one analyst, “Meta’s Frankenstein moment” — a powerful AI system let loose without adequate human oversight.

May – June 2025

Meta quietly rolled out upgraded AI moderation models — reportedly LLaMA-based — designed to detect child exploitation content, hate speech, and spam at scale. Within weeks, mass suspension reports flooded Reddit and X.

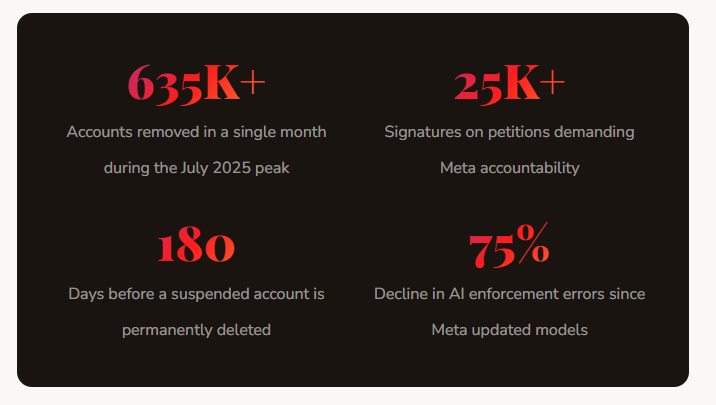

July 2025 — Peak Crisis

Nearly 635,000 accounts were removed in a single month. The AI flagged family photos, fitness content, car pictures, and business posts as serious policy violations. False “Child Sexual Exploitation (CSE)” labels devastated innocent users. A Change.org petition gathered over 25,000 signatures.

August – October 2025

Media pressure forced partial reversals. Meta acknowledged a “technical error” but offered little further explanation. Law firms in California and New York began soliciting clients for class-action lawsuits. South Korea’s Meta director publicly apologised.

2026 — Ongoing

Meta claims a 75% reduction in AI errors in the US, but suspensions continue globally. New trust-score systems now govern how quickly and severely accounts are penalised — and most users have no idea their score even exists.

“This summer’s mass suspensions have been more than a technical story — they’ve been a human one. Accounts are not just profiles — they’re memory banks and cash registers.”— CEO, Social Media Experts Ltd, London

Root Causes

The Real Reasons Instagram Suspends Accounts

Suspensions in 2026 fall into two categories: legitimate policy violations that users knowingly or unknowingly commit, and AI false positives that punish innocent accounts. Understanding both is critical to protecting yourself.

🤖 AI Moderation False Positives

Meta’s upgraded AI systems classify content at scale, often with no human review before action is taken. An innocent beach photo, a fitness transformation post, or a car video can trigger severe flags, including CSE labels, simply because the algorithm misreads context. The machine acts first; a human may never review the decision.

📋 Community Guideline Violations

Posting content that includes hate speech, nudity, graphic violence, or misinformation violates Instagram’s policies. Even unintentional violations — like using a banned hashtag without knowing it — can trigger automated action. Repeated minor violations escalate rapidly to permanent bans.

⚡ Suspicious Activity & Bot Behaviour

Sudden spikes in follows, unfollows, likes, or comments — especially if done rapidly — trigger Instagram’s spam detection. Third-party apps like follower trackers, auto-likers, and mass DM tools violate Instagram’s Terms of Service and often trigger immediate account suspension when detected.

🔗 Cross-Platform Meta Cascade

In 2026, a flag on any Meta platform — Facebook, Instagram, or Threads — can automatically suspend linked profiles across all three. If your accounts share the same Accounts Center, one violation can wipe out your entire Meta presence simultaneously.

🎯 Coordinated Mass Reporting

Instagram’s system often auto-suspends first and reviews later when multiple users report an account in a short timeframe. This mechanism is increasingly weaponised by competitors, trolls, and bad actors who deliberately organise mass-reporting campaigns to take down rivals.

©️ Intellectual Property Complaints

A single valid DMCA takedown or trademark complaint, whether over music in a Reel or an unlicensed logo, can escalate to full account action if the content is not removed promptly after the first warning.

The Hidden System

Instagram’s Secret “Trust Score” — And Why It Matters

What most users don’t know is that Instagram now assigns every account an internal trust score that determines how quickly and harshly it reacts to suspected violations. The higher your trust score, the more lenient the system is. The same action that gets a new account permanently banned might only generate a warning on a verified, established account.

📊 What Affects Your Instagram Trust Score

According to platform research and user reports, Instagram evaluates: login consistency (same device, same location), account age and verification status, behavioural patterns (gradual growth vs. sudden spikes), connections to previously flagged accounts, and the history of content violations or reports against your profile.

You cannot view your trust score directly. But every third-party app you connect, every automation tool you use, and every spam complaint filed against you quietly erodes it, until one day it drops below a threshold and your account is gone without warning.

⚠️ Critical Warning for Business Accounts

A false CSE (Child Sexual Exploitation) flag is among the most devastating outcomes, not just because of the suspension but because of the reputational damage that follows. Even after the account is restored, the accusation can harm client relationships and brand credibility. If this happens to you, appeal immediately and back your appeal with documented evidence of your content history.

How to Protect Yourself

What You Should Stop Doing Right Now

Many creators and business owners are unknowingly engaging in behaviours that put their accounts at severe risk. Here are the most common mistakes in 2026:

Using third-party follower management apps — “Who unfollowed me” tools, auto-likers, and mass DM platforms violate Instagram’s Terms of Service and are detected quickly.

Running multiple accounts from one device without isolation — Instagram allows up to 5 accounts per device, but sudden identical actions across all of them trigger coordinated inauthentic behavior flags.

Using banned or flagged hashtags — Some hashtags are permanently blocked. Using them even once can cause your post — and potentially your account — to be flagged.

Rapid follow/unfollow cycles — Aggressively following and unfollowing hundreds of accounts a day looks like bot behaviour to Instagram’s AI, even if done manually.

Ignoring copyright warnings — One or two ignored takedown notices can lead to your entire account being disabled. Remove flagged content immediately.

Linking all Meta accounts together — In 2026, having all your Facebook, Instagram, and Threads profiles in the same Accounts Center means one flag can cascade across all of them.